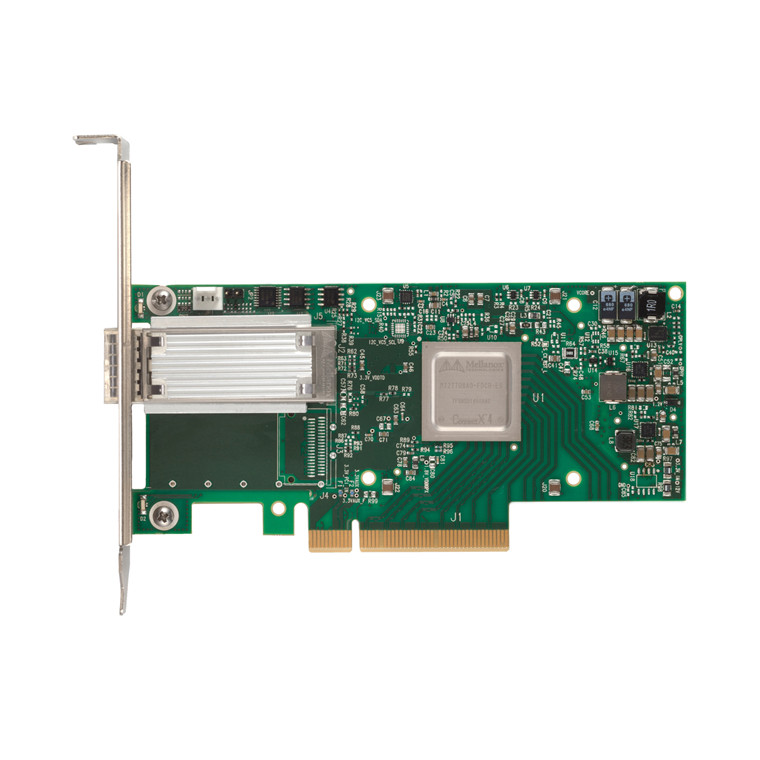

Mellanox MCX413A-GCAT ConnectX-4 EN network interface card, 50GbE single-port QSFP28, PCIe3.0 x8, tall bracket, ROHS R6

Out of stock

- Brand: Mellanox

- MPN: MCX413A-GCAT

- Part #: NICMLX1044

Product URL: https://www.pbtech.com/product/NICMLX1044/product/NICTPL1001/category/testimonials?testID=2236

Features

I/O Virtualization

ConnectX-4 EN SR-IOV technology provides dedicated adapter resources and guaranteed isolation and protection for virtual machines (VMs) within the server. I/O virtualization with ConnectX-4 EN gives data center administrators better server utilization while reducing cost, power, and cable complexity, allowing more VMs and more tenants on the same hardware.
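As a rough illustration of how an administrator might check SR-IOV capacity on a Linux host, the sketch below reads the standard PCI sysfs attributes `sriov_totalvfs` and `sriov_numvfs` for a network interface. The function name `sriov_vf_counts`, the `sysfs_root` parameter, and the interface name in the usage note are hypothetical and not taken from the product documentation; the values reported depend on firmware and driver configuration.

```python
from pathlib import Path


def sriov_vf_counts(iface: str, sysfs_root: str = "/sys") -> dict:
    """Return the supported and currently enabled VF counts for an interface.

    Assumes a Linux host whose driver exposes the PCI SR-IOV sysfs
    attributes under /sys/class/net/<iface>/device/.
    """
    dev = Path(sysfs_root) / "class" / "net" / iface / "device"
    return {
        # Maximum number of Virtual Functions the device advertises.
        "total": int((dev / "sriov_totalvfs").read_text().strip()),
        # Number of Virtual Functions currently enabled.
        "enabled": int((dev / "sriov_numvfs").read_text().strip()),
    }
```

On a host with the card installed, a call such as `sriov_vf_counts("enp3s0f0")` (interface name assumed) would return a dictionary with the two counts. Reading is side-effect free; enabling Virtual Functions requires writing to `sriov_numvfs` with root privileges and is deliberately left out of this sketch.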

Overlay Networks

To scale their networks, data center operators often create overlay networks that carry traffic from individual virtual machines over logical tunnels in encapsulated formats such as NVGRE and VXLAN. While this solves network scalability issues, it hides the inner TCP packet from the hardware offloading engines, placing higher loads on the host CPU. ConnectX-4 addresses this with NVGRE, VXLAN and GENEVE hardware offloading engines that encapsulate and decapsulate the overlay protocol headers, so the traditional offloads can be performed on the encapsulated traffic. With ConnectX-4, data center operators can achieve native performance in the new network architecture.
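To make the encapsulation step concrete, here is a minimal software sketch of the 8-byte VXLAN header defined in RFC 7348, which is prepended to the inner Ethernet frame inside an outer UDP datagram. It only illustrates the wire format; it is not how the adapter implements the offload, and the function names are hypothetical. The outer Ethernet, IP, and UDP headers are omitted for brevity.

```python
import struct
from typing import Tuple

VXLAN_FLAG_VNI_VALID = 0x08  # "I" flag: the VNI field is valid (RFC 7348)
VXLAN_UDP_PORT = 4789        # IANA-assigned destination port for VXLAN
VXLAN_HEADER_LEN = 8


def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header for a 24-bit VXLAN Network Identifier."""
    if not 0 <= vni < 2 ** 24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 0: flags in the top byte, followed by 24 reserved bits.
    # Word 1: VNI in the top 24 bits, followed by 8 reserved bits.
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def encapsulate(inner_frame: bytes, vni: int) -> bytes:
    """Return the outer UDP payload: VXLAN header plus the inner frame."""
    return vxlan_header(vni) + inner_frame


def decapsulate(udp_payload: bytes) -> Tuple[int, bytes]:
    """Strip the VXLAN header and return (vni, inner_frame)."""
    if len(udp_payload) < VXLAN_HEADER_LEN:
        raise ValueError("payload shorter than a VXLAN header")
    word0, word1 = struct.unpack("!II", udp_payload[:VXLAN_HEADER_LEN])
    if not (word0 >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI-valid flag not set")
    return word1 >> 8, udp_payload[VXLAN_HEADER_LEN:]
```

Because the inner TCP segment sits behind the outer headers and this VXLAN header, a NIC that only parses the outer packet cannot apply checksum or segmentation offloads to it. Parsing the tunnel header in hardware, as described above, is what exposes the inner packet to those offloads.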

ASAP²

Mellanox ConnectX-4 EN offers Accelerated Switching And Packet Processing (ASAP²) technology to perform offload activities in the hypervisor, including data path, packet parsing, VXLAN and NVGRE encapsulation/decapsulation, and more. ASAP² offloads the data plane to the NIC hardware using SR-IOV while leaving the control plane used in today's software-based solutions unmodified. The result is significantly higher performance without the associated CPU load. ASAP² comes in two formats: ASAP² Flex™ and ASAP² Direct™. One example of a virtual switch that ASAP² can offload is Open vSwitch (OVS).

Specifications

- 100GbE / 56GbE / 50GbE / 40GbE / 25GbE / 10GbE / 1GbE

- IEEE 802.3bj, 802.3bm 100 Gigabit Ethernet

- 25G Ethernet Consortium 25, 50 Gigabit Ethernet

- IEEE 802.3ba 40 Gigabit Ethernet

- IEEE 802.3ae 10 Gigabit Ethernet

- IEEE 802.3az Energy Efficient Ethernet

- IEEE 802.3ap based auto-negotiation and KR startup

- Proprietary Ethernet protocols (20/40GBASE-R2, 50/56GBASE-R4)

- IEEE 802.3ad, 802.1AX Link Aggregation

- IEEE 802.1Q, 802.1P VLAN tags and priority

- IEEE 802.1Qau (QCN) - Congestion Notification

- IEEE 802.1Qaz (ETS)

- IEEE 802.1Qbb (PFC)

- IEEE 802.1Qbg

- IEEE 1588v2

- Jumbo frame support (9.6KB)

ENHANCED FEATURES

- Hardware-based reliable transport

- Collective operations offloads

- Vector collective operations offloads

- Mellanox PeerDirect™ RDMA (aka GPUDirect®) communication acceleration

- 64b/66b encoding

- Extended Reliable Connected transport (XRC)

- Dynamically Connected transport (DCT)

- Enhanced Atomic operations

- Advanced memory mapping support, allowing user mode registration and remapping of memory (UMR)

- On demand paging (ODP) - registration free RDMA memory access

STORAGE OFFLOADS

- RAID offload - erasure coding (Reed-Solomon) offload

- T10 DIF - Signature handover operation at wire speed, for ingress and egress traffic

OVERLAY NETWORKS

- Stateless offloads for overlay networks and tunneling protocols

- Hardware offload of encapsulation and decapsulation of NVGRE and VXLAN overlay networks

HARDWARE-BASED I/O VIRTUALIZATION

- Single Root IOV

- Multi-function per port

- Address translation and protection

- Multiple queues per virtual machine

- Enhanced QoS for vNICs

- VMware NetQueue support

VIRTUALIZATION

- SR-IOV: Up to 256 Virtual Functions

- SR-IOV: Up to 16 Physical Functions per port

- Virtualization hierarchies (e.g. NPAR)

  - Virtualizing Physical Functions on a physical port

  - SR-IOV on every Physical Function

- 1K ingress and egress QoS levels

- Guaranteed QoS for VMs

CPU OFFLOADS

- RDMA over Converged Ethernet (RoCE)

- TCP/UDP/IP stateless offload

- LSO, LRO, checksum offload

- RSS (can be done on encapsulated packet), TSS, HDS, VLAN insertion / stripping, Receive flow steering

- Intelligent interrupt coalescence

REMOTE BOOT

- Remote boot over Ethernet

- Remote boot over iSCSI

- PXE and UEFI

PROTOCOL SUPPORT

- OpenMPI, IBM PE, OSU MPI (MVAPICH/2), Intel MPI, Platform MPI, UPC, Open SHMEM

- TCP/UDP, MPLS, VXLAN, NVGRE, GENEVE

- iSER, NFS RDMA, SMB Direct

- uDAPL

MANAGEMENT AND CONTROL INTERFACES

- NC-SI, MCTP over SMBus and MCTP over PCIe - Baseboard Management Controller interface

- SDN management interface for managing the eSwitch

- I2C interface for device control and configuration

- General Purpose I/O pins

- SPI interface to Flash

- JTAG IEEE 1149.1 and IEEE 1149.6